TikTok Enabling Spread Of Election Conspiracy Disinformation

Reprinted with permission from MediaMatters

Video-sharing social media network TikTok has largely been responsive in removing specific instances of misinformation reported on its platform -- but has not taken sufficient action against accounts that repeatedly peddle election-related lies. As a result, the platform appears to be struggling to control the overall spread of election and voting conspiracy theories at a critical time when ballots are still being counted and the American people need clarity.

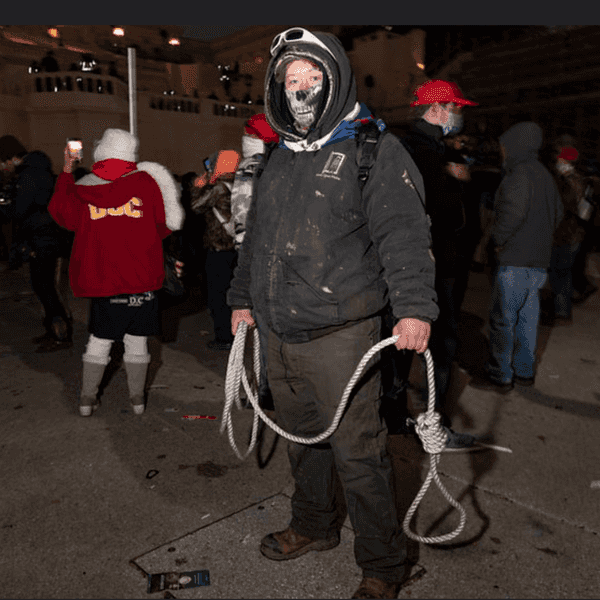

A lack of consistent enforcement of the company's community standards has made TikTok a hub for election misinformation, a problem that can have dangerous real-life consequences. A plot to attack a Philadelphia vote counting location was stopped by police this week and the armed suspect's vehicle was reportedly decorated with a window decal supporting the QAnon conspiracy theory, which has spread widely on social media networks in recent years. Unfounded conspiracy theories about mass voter fraud are now circulating on social media, emboldening far-right groups and individuals protesting outside election centers -- and TikTok has played a role in enabling the rapid spread of these dangerous misinformation campaigns.

Media Matters previously reported on a QAnon election conspiracy theory which spread on the platform claiming that President Donald Trump secretly worked with the Department of Homeland Security to watermark legitimate mail-in ballots ahead of Election Day in order to prove that Democrats are committing mass voter fraud by submitting fake ballots that lacked a watermark. TikTok almost immediately removed the identified videos that were pushing this absurd theory, yet when searching the very next day for "watermark ballots" on the platform, nearly all of the resulting top videos showed users peddling the conspiracy theory, as well as other viral videos pushing the false claim.

This example is just one illustration of why TikTok must go further -- by more proactively monitoring and addressing several aspects of the platform to limit the proliferation of sometimes dangerous falsehoods and conspiracy theories.

TikTok's algorithm allows small, unknown accounts to quickly spread viral misinformation

When it comes to reach on TikTok, a user's follower count does not necessarily reflect how far their content will spread. Coordinated misinformation campaigns from relatively small accounts have spread quickly across the platform because of the site's design and algorithm.

The platform provides users with two content feeds: one that displays videos from accounts they are following, and one for content curated by TikTok's algorithm. The curated page, known as the "For You feed," is based on users' interactions, video information, and device and account settings. As TikTok explains:

While a video is likely to receive more views if posted by an account that has more followers, by virtue of that account having built up a larger follower base, neither follower count nor whether the account has had previous high-performing videos are direct factors in the recommendation system.

This means that the platform cannot simply delete a single video to stop the spread of viral election conspiracy theories; TikTok must also take the next step in enforcing its content policies and begin thoroughly removing accounts that have repeatedly shared election misinformation.

TikTok has not taken sufficient action against serial election misinformers

Media Matters has identified a number of repeat offender accounts that TikTok has yet to terminate despite strong patterns of spreading election misinformation.

Without such action, serial election misinformation peddlers are able to repeatedly push dangerous conspiracy theories on the platform. TikTok must commit to cutting election misinformation at its roots when there is a clear pattern of repeated misinformation spread, rather than playing whack-a-mole with individual videos.

While this is not a comprehensive list of known TikTok misinformers, it does highlight how TikTok's existing enforcement process has enabled the spread of misinformation about voting and the 2020 election:

thislilcoop

TikTok did not remove this example, first highlighted in our report, but instead added an election information banner and lowered the discoverability of this user's video, which suggests that Democratic nominee Joe Biden is "calling all the dead to rise and vote since TRUMP WON," with boxes labeled "EMERGENCY DEMOCRAT VOTES."

The account continued to post baseless election misinformation suggesting that Democrats are planting fraudulent ballots in Michigan.

trishayarrington

TikTok removed this user's previous election misinformation video claiming that Biden received "magic ballots" overnight in Michigan, but allowed their account to remain active. Subsequently, the user has posted more election misinformation, including promoting the discredited "Sharpie" voting conspiracy theory.

bstone412

This user has repeatedly posted about their videos being removed from the platform for pushing election misinformation, but the account remains active and continues to spread false claims "about the voter fraud that's going on all over the country," specifically citing Pennsylvania, where ballots are still being counted to decide the election.

unfiltered..conservative

TikTok removed this user's video falsely claiming that there is mass mail-in ballot fraud in Michigan working in Biden's favor, but allowed the account to remain active. Since then, the user has uploaded more election misinformation and complained about their videos being removed.

Kimmienc

This user had their account with over 60,000 followers removed for spreading election misinformation, including the unfounded watermark conspiracy theory, but already has two backup TikTok accounts to evade the ban.

TikTok is full of other serial election misinformers as well

boomerjustin

This user perpetuated the conspiracy theory about ballots for Biden magically appearing in Michigan, as well as the QAnon-related watermark ballot conspiracy theory.

This user was also highlighted in a previous Media Matters report and identified as a QAnon-promoting account.

brewer65

This user is pushing a variation of right-wing media's conspiracy theory about states allegedly counting more votes than they had registered voters before Election Day.

They have also been identified as a QAnon account.

mistywiseman

This user -- who also appears to be affiliated with the QAnon conspiracy theory -- has only a few thousand followers. But the account has pushed multiple election-related conspiracy theories on TikTok, including promoting false claims about watermarked ballots (in a now-removed video) and more election misinformation about the use of Sharpies to fill out ballots.

courtney.rebecca2

This user repeatedly pushed the watermarked ballot conspiracy theory, even after their first election misinformation video was deleted.
